The Pruning Protocol: How Aggressive Weekly Cuts Produce 25% Efficiency Gains in Google Ads

Most Google Ads accounts are not fundamentally broken. They are bloated.

There is a meaningful difference between those two diagnoses. A broken account needs to be rebuilt. A bloated account needs to be cut. The cuts are faster, cheaper, and more reliably effective than rebuilding. And most accounts that look broken are actually just carrying three years of accumulated dead weight: keywords that never converted, creative that users stopped seeing, placements that consume budget without producing pipeline, and scheduling that spends money at 3 AM on researchers who will never buy.

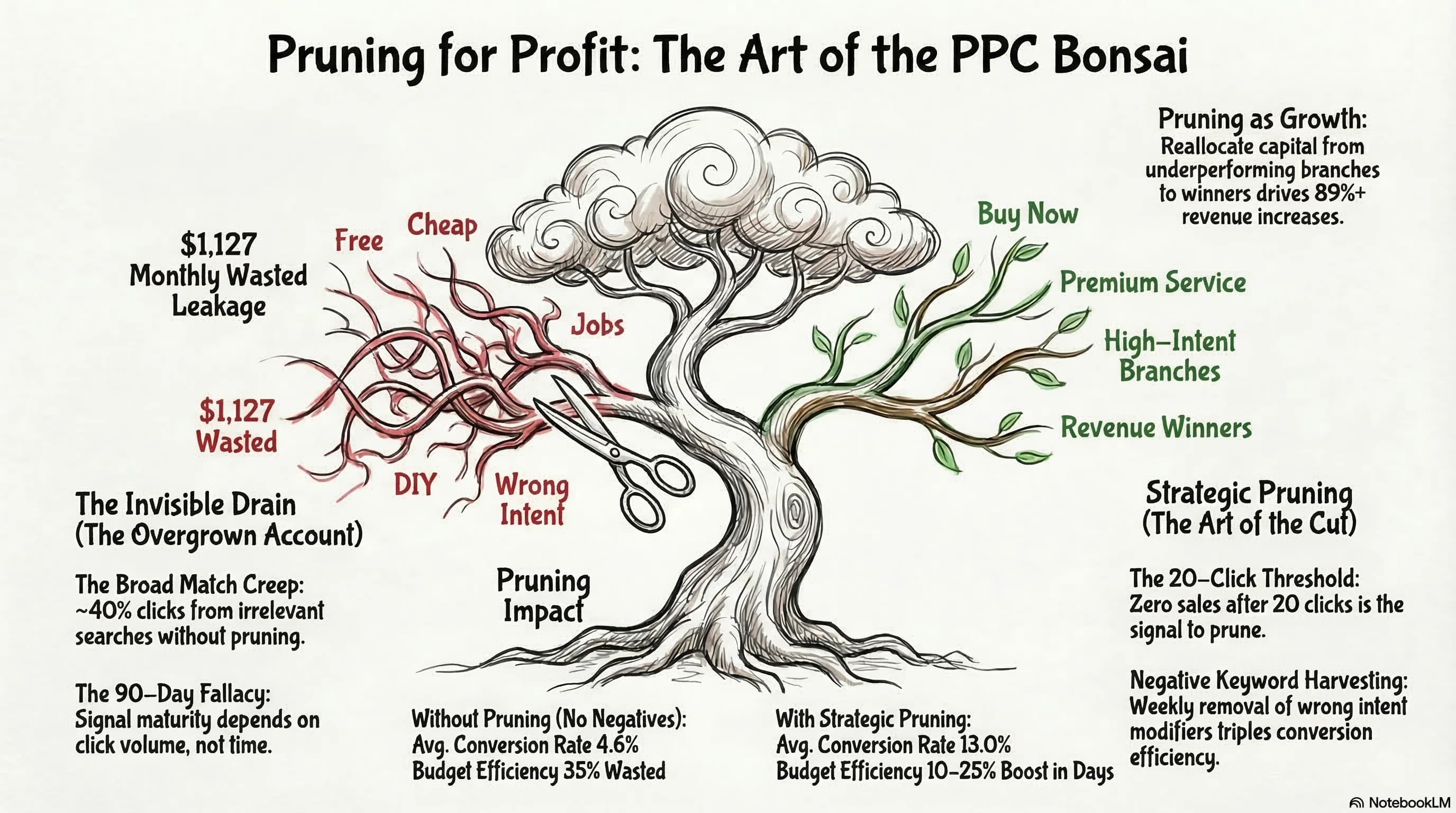

CPCs are rising 13% year over year. Budget waste from structural inefficiency runs 15 to 25% in the average account. The combination means that an account that was marginally efficient two years ago is now actively uncompetitive. The operators winning in this environment are not outspending their competitors. They are out-pruning them.

This is the weekly discipline that produces 10 to 25% efficiency gains without adding a dollar of budget: systematic, unsentimental removal of everything that is consuming spend without generating return, and reallocation of that capital to the elements that are already working.

For the signal architecture context that makes pruning decisions stick, The $1,127 Algorithmic Tax covers why the algorithm keeps spending on losers and what changes when you fix the conversion signal quality underneath the pruning work.

Why the Algorithm Keeps Spending on Underperformers

Before building the pruning discipline, you need to understand why Google's machine learning system does not solve this problem automatically. The answer has two parts.

First, Smart Bidding requires data density to function. The threshold for Target CPA is 30 conversions per month per campaign. For Target ROAS it is 50. Below those thresholds, the algorithm is not optimizing. It is exploring. And exploration means spending budget on auctions where the conversion probability is genuinely uncertain. In low-volume campaigns, the learning phase can extend from the standard 14 days into a 30-day-plus stalled state where 30 to 50% of budget goes to expensive guesses that produce no signal the system can learn from.

Second, the system is not incentivized to stop spending. It is incentivized to find impressions and clicks. When conversion data is sparse, it falls back on proxy signals: auction competitiveness, query volume, historical CTR patterns. These proxies do not correlate with purchase intent. They correlate with traffic volume. The algorithm finds traffic. Your job is to define whether that traffic is worth buying.

The Broad Match evolution compounds this. Broad Match in 2026 interprets session history, location signals, and device context to predict intent. When the algorithm has not yet locked onto a clear conversion pattern, this contextual interpretation creates hidden waste by bidding on semantically adjacent queries that have no commercial value for your specific offer.

| System Behavior | Operator Reality |
|---|---|
| Auction-time signals evaluate device, search history, time since last visit | High-volume queries often lack commercial intent entirely |
| Broad match interprets session history to predict intent | Without conversion lock-on, this produces expensive guesses |
| Learning phase tests bid levels to find an optimal conversion path | Low volume traps campaigns in a 30-day exploration loop |

The pruning protocol works because it removes the noise that prevents the algorithm from locking onto signal. Every irrelevant keyword you remove, every junk placement you exclude, every exhausted creative you replace cleans the data environment the algorithm is learning from. You are not fighting the machine. You are giving it better material to work with.

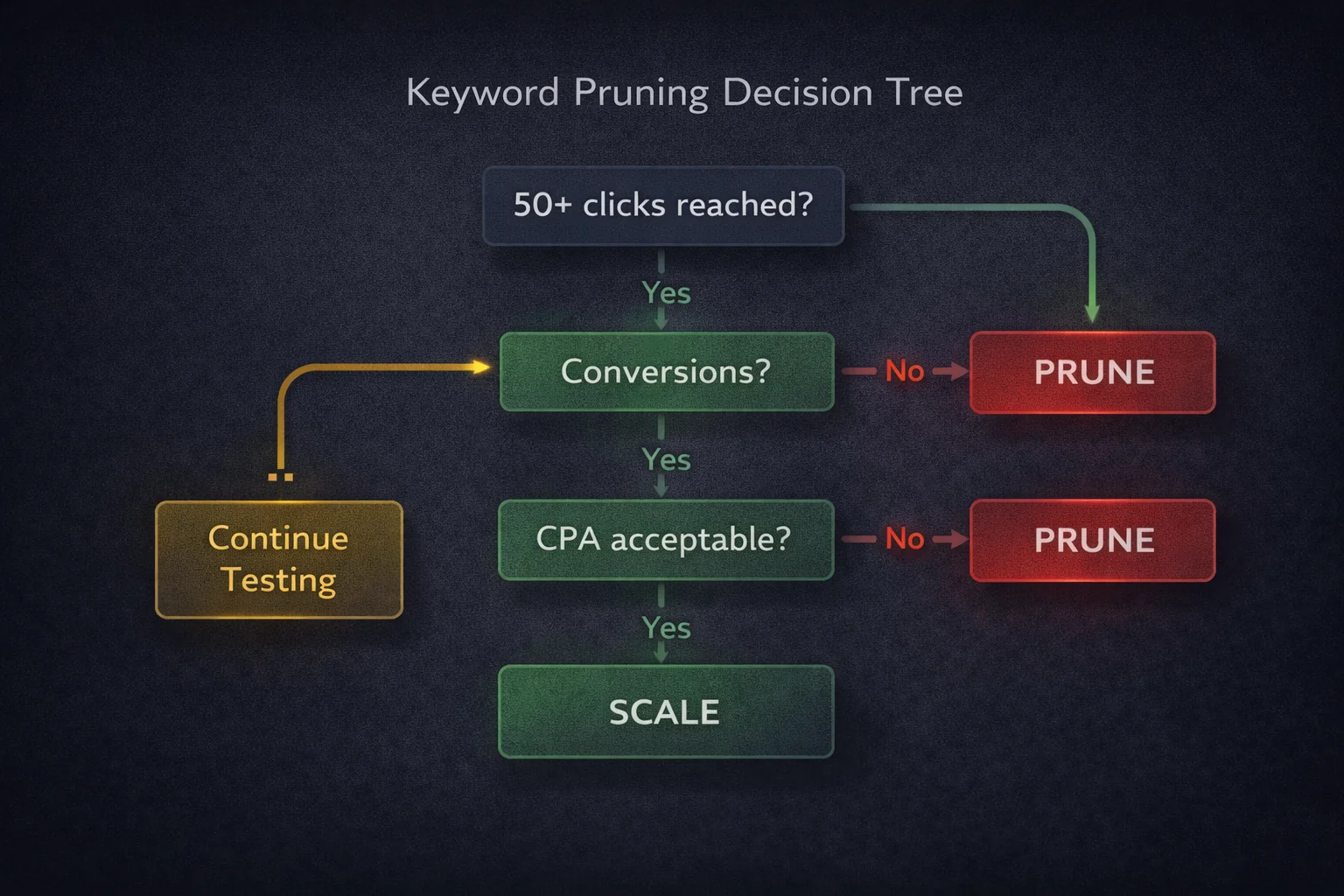

The 50-Click Rule: When a Keyword Is Out of Testing

Pruning decisions require statistical grounding. Cutting a keyword after five clicks is not a data-driven decision. Cutting it after 200 clicks with zero conversions is obvious but expensive. The threshold that balances statistical significance against budget efficiency is 50 to 100 clicks.

A keyword that reaches 50 to 100 clicks with zero conversions is out of testing. The probability that it converts on click 51 is not zero, but it is low enough that continued spend is not justified by the expected return. Add it as a negative if the query type is categorically irrelevant. Pause it if it is just underperforming in the current context.

The second threshold is CPA-based: if a keyword's spend reaches 2 to 3 times your Target CPA with zero conversions, it must be pruned regardless of click volume. At that spend level, you have paid for a conversion that did not materialize. Continued spend at that rate will not produce a different outcome.
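The two thresholds can be combined into a single decision rule. The following is a minimal sketch, assuming keyword stats have been exported into simple records; the `KeywordStats` type, the `prune_decision` name, and the default values (the low end of each range above) are illustrative assumptions, not part of any Google Ads API.

```python
from dataclasses import dataclass


@dataclass
class KeywordStats:
    # Hypothetical record built from an exported keyword report.
    text: str
    clicks: int
    cost: float
    conversions: float


def prune_decision(kw: KeywordStats, target_cpa: float,
                   click_threshold: int = 50,
                   cpa_multiple: float = 2.0) -> str:
    """Return 'keep', 'monitor', or 'prune' for one keyword.

    Assumption: any keyword with at least one conversion is kept here;
    judging whether its CPA is acceptable is a separate question.
    """
    if kw.conversions > 0:
        return "keep"
    # CPA-based threshold: applies regardless of click volume.
    if kw.cost >= cpa_multiple * target_cpa:
        return "prune"
    # Click-based threshold: out of testing with zero conversions.
    if kw.clicks >= click_threshold:
        return "prune"
    # Not enough data yet for a confident decision.
    return "monitor"
```

Raising `click_threshold` to 100 or `cpa_multiple` to 3.0 gives the more patient end of each range. Whether "prune" means a pause or a negative remains a judgment call on the query type.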

A Maintenance Gap occurs when an account relies on static negative keyword lists that were built once and never updated. Search behavior evolves continuously. New query patterns emerge as user language shifts, as competitors change their positioning, and as the algorithm expands into new semantic territory. A negative keyword list that is not reviewed weekly is a liability that grows more expensive over time.

For Broad Match campaigns specifically, your negative keyword list should be approximately three times longer than your keyword list. This ratio reflects the volume of adjacent intent territory that Broad Match will explore without explicit exclusion. If your keyword list has 50 terms, your negative list should have at least 150 to maintain effective traffic sculpting.
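The ratio check is simple arithmetic and can be scripted as part of the weekly review. This is a small sketch; the function name and its parameters are hypothetical, and the 3.0 default is the approximate ratio stated above.

```python
import math


def negative_list_gap(keyword_count: int, negative_count: int,
                      ratio: float = 3.0) -> int:
    """Return how many more negatives are needed to reach the target ratio.

    Returns 0 when the negative list already meets or exceeds the ratio.
    """
    required = math.ceil(keyword_count * ratio)
    return max(0, required - negative_count)
```

With 50 keywords and 90 negatives, the function reports a gap of 60, which matches the 150-negative floor in the example above.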

The weekly Search Term Review that enforces this is covered in depth in The Search Term Report Audit. The pruning protocol adds the decision layer on top of that review: what to cut, what to harvest, and what to monitor before making a final decision.

The 3 AM Problem: Scheduling as a Pruning Decision

Traffic volume is not purchase intent. This distinction becomes expensive when accounts run campaigns 24 hours a day without examining conversion rate variance by hour.

In professional services, the data consistently shows 4 to 6 times variance in conversion rates between peak business hours and late-night windows. Users searching at 2 AM are predominantly in research mode: reading comparisons, gathering information for a decision they will make in the morning, or browsing without immediate purchase intent. The click happens. The conversion does not.

The budget spent on those 2 AM clicks is not just wasted. It trains the algorithm on non-converting sessions, which pollutes your conversion signal data and nudges the system toward optimizing for the audience profile of late-night researchers rather than morning buyers.

Pull a conversion rate report segmented by hour of day and day of week. Identify the windows where conversion rate falls below 50% of your account average. Apply bid adjustments to reduce spend during those windows. For accounts with very clear patterns, consider dayparting to remove spend entirely from the lowest-converting windows and reallocate that budget to Monday through Wednesday morning windows, where buyer intent is typically highest.
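Flagging those windows is easy to automate once the report is exported. The sketch below assumes each row is a `(day_of_week, hour, clicks, conversions)` tuple; that row shape and the function name are assumptions for illustration, not a Google Ads export format.

```python
from collections import defaultdict


def low_converting_windows(rows, floor_ratio=0.5):
    """Return sorted (day, hour) windows converting below the floor.

    rows: iterable of (day_of_week, hour, clicks, conversions).
    floor_ratio: fraction of the account-average conversion rate
    below which a window is flagged (0.5 = 50% of average).
    """
    clicks = defaultdict(int)
    convs = defaultdict(float)
    for day, hour, c, v in rows:
        clicks[(day, hour)] += c
        convs[(day, hour)] += v

    total_clicks = sum(clicks.values())
    if total_clicks == 0:
        return []
    account_cvr = sum(convs.values()) / total_clicks

    flagged = []
    for window, c in clicks.items():
        if c == 0:
            continue  # no traffic, nothing to judge
        if convs[window] / c < floor_ratio * account_cvr:
            flagged.append(window)
    return sorted(flagged)
```

Windows with only a handful of clicks will produce noisy rates, so it is worth checking click volume for a flagged window before acting on it.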

The capital recovered from off-peak pruning compounds. It does not just stop waste. It funds additional exposure during the windows that actually convert.

The Three Cannibalization Scenarios That Inflate Your CPCs

Budget waste from internal cannibalization is less visible than keyword waste but often more expensive. These three scenarios appear consistently in account audits and each one has a specific technical fix.

Brand vs. Remarketing Overlap

Running awareness campaigns and remarketing campaigns without mutual audience exclusions means you are bidding against yourself for the same user at different stages of their journey. The same person who saw your awareness ad yesterday is now in a remarketing audience. Without exclusions, both campaigns enter the same auction for their next search. Your CPC for that user rises because two of your campaigns are competing. Add your remarketing audiences as audience exclusions in your awareness campaigns to prevent this.

Sequential Remarketing Failure

A 30-day remarketing list includes everyone who visited your site in the last 30 days, including the subset who visited in the last 7 days. Without exclusions, users in your 7-day list are also receiving messaging designed for the 30-day list. They are being served both the urgent follow-up message and the softer re-engagement message simultaneously. Exclude your 7-day list from your 30-day campaigns so each audience segment receives only the messaging appropriate for their recency.

The Attribution Lie

Last-click attribution gives 100% of conversion credit to the final touch point before conversion. For branded search campaigns, this means the campaign that captures a user searching your brand name after they have already been through your funnel receives full conversion credit. The attribution model says the branded search ad drove the conversion. The incrementality reality is that the user was already going to convert and the branded ad intercepted them at the finish line.

Test this by pausing branded search in a defined geographic test market for two to four weeks and measuring whether total conversions in that market drop in proportion to the conversions the branded campaign had been credited with. If total conversions hold relatively stable, you are paying for passengers rather than drivers. The branded search spend is claiming attribution credit for organic conversions, not generating incremental ones.
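One way to read the result of such a test is sketched below. It adds a comparison the text above does not describe: a control market where branded search keeps running, used to adjust for seasonality. That control market, the function name, and all parameter names are assumptions, and the before and during periods are assumed to be the same length.

```python
def incremental_share(test_before: float, test_during: float,
                      control_before: float, control_during: float,
                      branded_credited_before: float) -> float:
    """Estimate the fraction of branded-credited conversions that were
    truly incremental, clamped to the range 0.0 to 1.0.

    test_*: total conversions in the market where branded search is paused.
    control_*: total conversions in a comparable market left unchanged
        (an assumption of this sketch).
    branded_credited_before: conversions last-click attribution gave to
        branded search in the test market during the baseline period.
    """
    if control_before <= 0 or branded_credited_before <= 0:
        raise ValueError("baseline values must be positive")
    # What the test market would likely have done without the pause.
    expected = test_before * (control_during / control_before)
    lost = max(0.0, expected - test_during)
    return min(1.0, lost / branded_credited_before)
```

A result near 0 suggests the branded spend was mostly intercepting demand that would have arrived anyway. A result near 1 suggests the attribution credit was largely earned.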

The Ad Copy Audit: Catching Creative Fatigue Before the Data Drops

By the time CTR visibly declines, the creative is already dead. Users develop ad blindness to specific combinations of headlines and descriptions before the degradation shows up in performance data. The metric lags the reality by weeks.

The threshold that triggers replacement is frequency-based, not performance-based. When a user has seen a specific ad combination 8 to 10 times without converting, the creative is exhausted for that user segment. At scale, this means the creative combination needs rotation, not because the data is showing problems yet, but because the exposure pattern predicts that problems are coming.

Two checks support this audit:

Run a Duplicate Ads script to scan for identical copy appearing across multiple ad groups. Duplicate ads split impression and conversion data between identical creative, which prevents either instance from reaching statistical significance and slows the algorithm's ability to identify winning combinations. Consolidate them into single ad groups or replace duplicates with genuinely differentiated creative.

For Search Partners and Display Network expansion within search campaigns, apply a 14-day performance window. If these networks do not meet your CPA targets within 14 days of campaign launch, disable them. Search Partners and Display expansion are primary waste drivers for B2B accounts and high-intent service businesses. The default setting is enabled. The default is usually wrong.
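The duplicate-ad scan described above normally runs as a Google Ads script inside the account. The same logic can be sketched offline in Python against an exported ad list. This is not that script: the dict keys (`ad_group`, `headlines`, `descriptions`) are a hypothetical export shape chosen for illustration.

```python
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences match."""
    return " ".join(text.lower().split())


def find_duplicate_ads(ads):
    """Return {copy_fingerprint: [ad groups]} for copy used in 2+ ad groups.

    ads: iterable of dicts with 'ad_group', 'headlines', 'descriptions'.
    Asset order is ignored, since responsive ads rotate assets anyway.
    """
    groups = defaultdict(set)
    for ad in ads:
        key = (
            tuple(sorted(normalize(h) for h in ad["headlines"])),
            tuple(sorted(normalize(d) for d in ad["descriptions"])),
        )
        groups[key].add(ad["ad_group"])
    return {k: sorted(v) for k, v in groups.items() if len(v) > 1}
```

Each entry in the result is a candidate for consolidation or for replacement with differentiated creative.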

The Weekly Audit Cadence

The efficiency gains from pruning are not one-time. They are a function of the cadence at which waste is identified and removed. An account reviewed monthly loses four weeks of budget to patterns that a weekly review would catch in seven days. This is the operational rhythm that maintains capital efficiency.

Daily Checks

Policy violations and billing issues must be addressed the same day they appear. A suspended campaign or a billing hold that runs for 48 hours without detection disrupts learning phases and wastes runway.

Budget pacing tells you whether you are on track to spend your daily budget appropriately. If a campaign is limited by budget but CPL is stable and within target, raising the daily budget by 20% is justified. If CPL is above target, restricting geographic targeting to your highest-converting areas is more effective than raising budget. Spending more money on a poorly performing campaign does not improve performance.
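The pacing logic above reduces to a small decision function. This is a sketch under stated assumptions: the function and action names are hypothetical, and "stable" CPL is not modeled here, only whether CPL is within target.

```python
def pacing_action(limited_by_budget: bool, cpl: float,
                  target_cpl: float, daily_budget: float):
    """Return (action, new_daily_budget) for one campaign.

    Actions: 'restrict_geo', 'raise_budget', or 'hold'.
    """
    if cpl > target_cpl:
        # Above target: tighten targeting instead of spending more.
        return ("restrict_geo", daily_budget)
    if limited_by_budget:
        # Within target and capped by budget: a 20% raise is justified.
        return ("raise_budget", round(daily_budget * 1.20, 2))
    return ("hold", daily_budget)
```

The order of the checks matters: CPL is tested first so that a budget-limited campaign with poor CPL never receives more money.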

Check that your conversion tags are firing. A broken conversion tag running at $150 per day produces $4,500 per month of blind optimization: the algorithm is running, spending, and making bid decisions with no conversion data to guide it. This is one of the most expensive silent failures in Google Ads management. A daily tag health check costs 60 seconds and prevents this entirely.
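A daily tag health check can be approximated from reporting data alone. The heuristic below is an assumption of this sketch, not a Google Ads feature: it flags a day that has spend but zero recorded conversions when the trailing baseline shows the campaign normally converts. The function name, the 14-day baseline, and the one-conversion-per-day floor are all illustrative.

```python
def tag_looks_broken(daily, baseline_days=14, min_baseline_rate=1.0):
    """Return True when the latest day looks like a tracking failure.

    daily: list of (spend, conversions) tuples, oldest first; the last
        entry is the day being checked.
    min_baseline_rate: minimum average conversions per day in the
        trailing window for a zero day to count as suspicious.
    """
    if len(daily) < 2:
        return False  # no history to compare against
    latest_spend, latest_conversions = daily[-1]
    history = daily[-(baseline_days + 1):-1]
    baseline = sum(conv for _, conv in history) / len(history)
    return (latest_spend > 0
            and latest_conversions == 0
            and baseline >= min_baseline_rate)
```

A flag is a prompt to inspect the tag directly, since a low-volume campaign can legitimately record a zero day.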

Weekly Pruning

Filter the Search Terms Report for queries with $50 or more in spend and zero conversions over the last 7 days. Add confirmed junk immediately as negatives. Do not batch these. The faster the response cycle, the less each pattern costs before it is removed.
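The filter can be applied to an exported Search Terms Report in a few lines. This sketch assumes each row is a dict with `search_term`, `cost`, and `conversions` keys covering the last 7 days; those key names are assumptions about the export, not a fixed format.

```python
def wasted_search_terms(rows, min_spend=50.0):
    """Return rows with spend at or above min_spend and zero conversions,
    sorted from most to least expensive.

    rows: iterable of dicts with 'search_term', 'cost', 'conversions'.
    """
    flagged = [
        r for r in rows
        if r["cost"] >= min_spend and r["conversions"] == 0
    ]
    return sorted(flagged, key=lambda r: r["cost"], reverse=True)
```

The output is a review queue, not an automatic negative list: each term still needs a human check to confirm it is junk before it is excluded.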

Review Google's automated recommendations and dismiss the ones that do not serve your objectives. This is not contrarianism. It is calibration. Accepting recommendations indiscriminately trains the system to generate more recommendations, many of which serve Google's revenue targets rather than your efficiency goals. Dismissing low-quality recommendations signals to the system what guidance is actually useful.

Audit placement exclusions. Remove low-quality mobile app categories and junk domains from display and PMax placements. New irrelevant placements appear continuously as the algorithm explores. A weekly review catches them before they compound.

Monthly Deep Dive

Quality Score audit. A Quality Score of 8 versus 5 on the same keyword results in paying 30% less for the same ad position. Query the Quality Score distribution across your account and identify keywords where improving expected CTR or landing page experience would move them from the 4 to 6 range into 7 to 9. Each point of Quality Score improvement on a high-volume keyword compounds across every auction that keyword enters.

Landing page performance review. Improving page load time from 4 seconds to 1 second can reduce CPCs by up to 40% through the landing page experience component of Quality Score. Check Core Web Vitals for your highest-traffic landing pages monthly. Performance degradation from new scripts, plugins, or image additions accumulates between audits.

Tracking gap audit. Verify that Consent Mode v2 is active and that Enhanced Conversions are firing correctly. Without these, 20 to 40% of conversions become invisible to the algorithm in privacy-compliant environments. Making pruning decisions based on conversion data that is missing 30% of actual events produces systematic errors. You will pause keywords that are actually converting and scale keywords that are not.

The Capital Reallocation Model

Pruning is not cost reduction. It is capital reallocation. Every dollar removed from a zero-conversion keyword, an exhausted creative, a junk placement, or an off-peak scheduling window is a dollar available for the elements that are already working.

The Pareto principle applies consistently in Google Ads: 20% of keywords, creatives, and audiences drive 80% of profitable conversions. The inefficient 80% consumes budget, pollutes conversion data, and reduces the algorithm's ability to scale the efficient 20%.
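Whether the 80/20 split holds in a given account is worth measuring rather than assuming. The sketch below computes the share of conversions that comes from the top fraction of items; the function name and the input shape (a mapping of item name to conversions) are assumptions for illustration.

```python
def top_share(conversions_by_item, top_fraction=0.2):
    """Return the share of total conversions driven by the top items.

    conversions_by_item: dict mapping a keyword, creative, or audience
        name to its conversion count.
    top_fraction: portion of items to treat as the "top" group, rounded
        to the nearest whole item with a minimum of one.
    """
    values = sorted(conversions_by_item.values(), reverse=True)
    total = sum(values)
    if total == 0:
        return 0.0
    n = max(1, round(len(values) * top_fraction))
    return sum(values[:n]) / total
```

A result well below 0.8 suggests conversions are spread more evenly than the principle implies, in which case cuts should be made more cautiously.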

The objective of the pruning protocol is to progressively increase the proportion of budget allocated to the proven 20% while systematically removing everything else. This does not require finding new winning strategies. It requires identifying what is already working and removing the friction that prevents it from receiving more budget.

That reallocation produces efficiency gains of 10 to 25% within the first two weeks of consistent execution, not because you found something new, but because you stopped funding the waste that was competing with what was already working.

For the full technical picture of how pruning decisions interact with bid strategy, conversion signal quality, and campaign architecture, Inside the PMax Black Box covers how these operational disciplines function at the campaign level within Performance Max governance.

Frequently Asked Questions

How do I know when to pause a keyword in Google Ads? The statistical threshold is 50 to 100 clicks with zero conversions, or spend exceeding 2 to 3 times your Target CPA with no conversion. Either condition indicates the keyword has had sufficient testing exposure to make a reliable assessment. Below 50 clicks, you do not have enough data to make a confident pruning decision. Above 100 clicks with zero conversions, continued spend is difficult to justify on any reasonable probability model. Pause rather than delete, so you retain the historical performance data.

What is creative fatigue in Google Ads and how do I prevent it? Creative fatigue is the degradation in ad performance that occurs when users have seen the same ad combination enough times that they stop responding to it. In Responsive Search Ads, the relevant threshold is approximately 8 to 10 exposures without a conversion for a specific user segment. By the time CTR visibly drops, the creative has been underperforming for weeks. Prevent it by rotating ad assets based on impression frequency rather than waiting for metric degradation, and by replacing Low-rated assets every three to four weeks rather than waiting for performance to visibly decline.

Why should I dismiss Google Ads recommendations instead of accepting them? Google's automated recommendations optimize for spend efficiency from Google's perspective, which does not always align with your business objectives. Accepting recommendations indiscriminately can raise bids beyond your target CPA, expand match types before your account has sufficient conversion data, or add keywords that are semantically relevant but commercially irrelevant to your offer. Review each recommendation against your campaign objectives before accepting. Dismissing recommendations that do not serve your goals is not a failure to cooperate with the platform. It is the exercise of the operator judgment the system cannot replicate.

What is the attribution lie in Google Ads branded search? Last-click attribution gives full conversion credit to the final touch before conversion. For branded search, this means the campaign capturing users who already decided to buy and searched your brand name receives credit for those conversions. The question is whether removing that branded campaign would reduce total conversions or simply shift the attribution pathway. Test it by pausing branded search in a geographic test market and measuring total conversion volume. If conversions hold, you are paying to intercept demand that would have found you regardless. This is called an incrementality test and it reveals whether branded spend is generating new revenue or recovering credit for organic conversions.

How much of a Google Ads budget is typically wasted on structural inefficiency? Industry research consistently places structural waste in the range of 15 to 25% of total spend for accounts without active pruning disciplines. WordStream research has found that small businesses waste approximately 25% of their PPC budget on avoidable structural problems. The waste comes from multiple sources simultaneously: irrelevant query matches, off-peak scheduling, exhausted creative, junk placement inventory, and internal cannibalization from overlapping campaigns and audiences. Eliminating each source individually produces modest gains. Eliminating all of them simultaneously produces the 10 to 25% efficiency improvement that consistent pruning disciplines deliver.

How often should I review my Google Ads Search Terms Report? Weekly at minimum, with two review windows: the last 7 days to catch recent waste before it compounds, and the last 30 days to surface patterns that develop gradually. For accounts spending more than $10,000 per month, a daily review of the top-spending zero-conversion queries adds meaningful protection. The cost of the daily review is 10 to 15 minutes. The cost of allowing a toxic query pattern to run for a week before it is caught can be hundreds or thousands of dollars depending on your CPC and volume.

Sources

- WordStream — Small Businesses Waste 25% of Their PPC Budget

- WhatConverts — PPC Costs Are Rising and That Makes Every Dollar More Fragile

- Google Ads Help — About Attribution Models

- Google Ads Help — About Data-Driven Attribution

- Search Engine Land — Why Creative, Not Bidding, Is Limiting PPC Performance

- Search Engine Land — PPC Management Checklist: Daily, Weekly and Monthly Reviews

- Negator — 7 Scenarios Where Manual Bidding Beats Google Smart Bidding

- ALM Corp — Google Ads Automatically Re-Enabling Paused Keywords: 2026 Guide