The $1,127 Algorithmic Tax: Why Most Google Ads Accounts Are Optimized for Waste

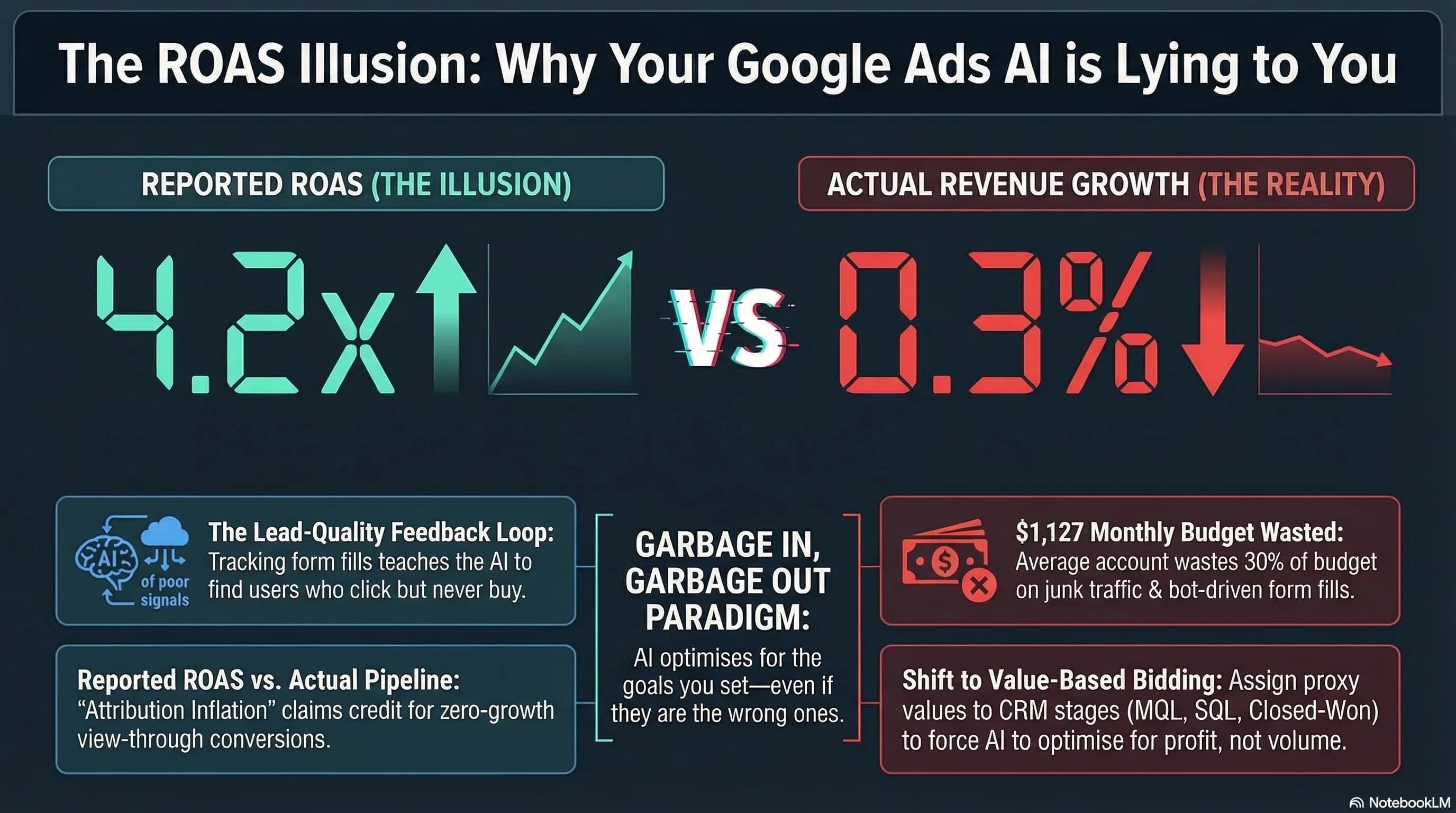

WordStream analyzed over 15,000 Google Ads accounts and found the median advertiser wastes $1,127 per month. Not because they are spending recklessly. Because they are feeding the algorithm the wrong instructions and the algorithm is executing those instructions perfectly.

Twenty-nine percent of those accounts recorded zero conversions over a 90-day period despite generating an average of 12,667 impressions. The spend happened. The traffic happened. The pipeline did not.

This is not a budget problem. It is a signal architecture problem. And the fix is not reducing spend. It is changing what you are asking the algorithm to optimize for.

If you want to understand how AI systems are reshaping the broader digital marketing environment your campaigns operate in, The SEO Roadmap for 2026 covers how search intent and AI-driven discovery are changing what happens before a user ever clicks an ad.

The Algorithm Is a Goal-Seeking Engine, Not a Strategy Partner

This is the most important reframe in modern Google Ads management.

Google's machine learning systems, particularly Performance Max, do not have judgment. They do not understand your business, your ideal customer, or what a good lead looks like. They are mathematical optimization engines designed to find the most efficient path to whatever objective you define.

If you define that objective as button clicks, the algorithm will find the cheapest button clicks available. Some of those will be bots. Some will be users who clicked by accident on mobile. Some will be competitors checking your landing page. The algorithm does not care. It hit the target you set.

This creates what looks like strong performance in the dashboard and stagnant pipeline growth in reality. High click volume, low cost per click, zero closed revenue. The dashboard says everything is working. The sales team has nothing to close.

The industry term for this is Garbage In, Garbage Out. But the more precise diagnosis is a mismatch between the signal you are sending and the outcome you actually want. Fix the signal and the algorithm works for you. Leave it broken and you are paying a monthly tax for the privilege of training Google's AI to find traffic that does not convert.

The Signal Architecture Model

Most accounts operate at one of three maturity levels. The gap between level one and level three is not complexity. It is the difference between optimizing for activity and optimizing for revenue.

Basic (Volume-Focused)

Optimizing for clicks, page views, and form fills with no downstream validation. This is the default state for most accounts and the highest-risk configuration. You are telling the algorithm that a click or a form submission is a success. The algorithm will find the cheapest way to generate those events, which systematically means lower-quality traffic over time as it expands match types and audiences to hit volume targets.

Intermediate (Attribution-Focused)

Using Enhanced Conversions and GCLID-based lead tracking. This is a meaningful improvement. Enhanced Conversions improves match rates by hashing first-party user data and matching it back to Google's records, giving the algorithm better signal about who converted. GCLID tracking ties lead submissions back to specific ad clicks. The limitation is that this level still prioritizes top-of-funnel lead quantity. A fake email address that passed your form validation counts the same as a qualified prospect who booked a demo.

Advanced (AI-Ready)

Implementing Value-Based Bidding combined with Offline Conversion Tracking. This is where the algorithm becomes genuinely useful. Instead of treating all conversions as equal, you assign proxy values that reflect downstream revenue probability. An MQL might carry a value of $50. A sales-qualified lead carries $500. A closed deal carries $5,000. You import these values back into Google Ads via OCT as deals progress through your CRM.

The algorithm now understands that a higher cost-per-click is worth paying if the traffic profile matches your high-value converter segments. It stops chasing volume and starts chasing revenue quality. The bid strategy does not change. What changes is what you are telling the algorithm a conversion is worth.
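One way to arrive at proxy values like these is to multiply an average deal value by the historical probability that a lead at each stage eventually closes. The sketch below is illustrative only: the 1% and 10% close rates and the `proxy_value` helper are assumptions chosen so the output lines up with the $50 / $500 / $5,000 example, not figures from any real CRM.

```python
# Hypothetical inputs: replace with close rates measured in your own CRM.
AVERAGE_DEAL_VALUE = 5_000.0
CLOSE_PROBABILITY = {
    "mql": 0.01,         # assumed: 1% of MQLs eventually close
    "sql": 0.10,         # assumed: 10% of SQLs eventually close
    "closed_won": 1.00,  # a closed deal carries its full value
}


def proxy_value(stage: str, deal_value: float = AVERAGE_DEAL_VALUE) -> float:
    """Expected revenue for a lead sitting at the given CRM stage."""
    try:
        probability = CLOSE_PROBABILITY[stage]
    except KeyError:
        raise ValueError(f"unknown CRM stage: {stage!r}") from None
    return round(deal_value * probability, 2)


if __name__ == "__main__":
    for stage in CLOSE_PROBABILITY:
        print(f"{stage}: ${proxy_value(stage):,.2f}")
```

Deriving the values from measured close rates, rather than picking round numbers, keeps the ratios between stages honest, and the ratios are what the bidding model actually reacts to.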

Negative Keywords Are Not Waste Reducers. They Are Boundary Definitions.

In a machine-learning environment, Broad Match without defensive negative keyword management is effectively a donation to Google's revenue targets.

The algorithm's mandate is to spend your budget on clicks. Left unrestricted, it will expand audience definitions, match to loosely related queries, and prioritize terms where it can generate volume efficiently. Without guardrails, this expansion is systematic and inevitable.

Data from accounts with active negative keyword management shows a nearly 3x improvement in conversion rates compared to accounts without: 13% versus 4.6%. That gap is not because the managed accounts had better ads or better landing pages. It is because they defined the boundaries of where the algorithm was allowed to explore.

There are two distinct types of negative keyword management that serve different functions:

Evergreen Negatives are account-level exclusions that eliminate zero-intent traffic permanently. These are the searches that will never convert regardless of how well your ads or landing pages perform. Common categories include job seekers (jobs, careers, internship, hiring), price-sensitive browsers who will never buy at your price point (free, cheap, discount, DIY), and informational queries that belong in a content strategy, not a paid campaign (how to, Wikipedia, tutorial, guide).

Set these at the account level. They apply everywhere and require no ongoing maintenance.

Traffic Sculpting Negatives are campaign-level exclusions that prevent internal cannibalization. The most common and damaging version of this problem is Performance Max stealing branded search traffic. When a user searches your brand name, they already intend to find you. That click should be captured by a branded search campaign at low cost per click with high relevance. If PMax captures it instead, it claims credit for a conversion that cost significantly more and that the branded campaign would have won anyway.

The fix is adding exact-match brand terms as negative keywords at the PMax campaign level. This forces brand queries back to your branded search campaign where they belong and stops PMax from inflating its reported ROAS with traffic it did not generate.

| Negative Keyword Type | Purpose | Examples |
|---|---|---|
| Evergreen Negatives | Account-level exclusions for zero-intent traffic | jobs, free, DIY, cheap, internship, how-to, Wikipedia |
| Traffic Sculpting | Campaign-level to prevent internal cannibalization | Exact-match brand terms excluded from PMax campaigns |
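To see how much of an existing search terms export an evergreen list would have blocked, a rough offline check can help. This is a minimal sketch: the term list is taken from the examples above, the function names are hypothetical, and the whole-word matching only approximates how Google Ads applies negative keywords.

```python
import re

# Evergreen negatives taken from the examples in this article.
EVERGREEN_NEGATIVES = [
    "jobs", "careers", "internship", "hiring",
    "free", "cheap", "discount", "diy",
    "how to", "wikipedia", "tutorial", "guide",
]


def matched_negatives(search_term: str, negatives=EVERGREEN_NEGATIVES) -> list[str]:
    """Return the negatives that appear as whole words or phrases in the term."""
    normalized = " ".join(search_term.lower().split())
    hits = []
    for negative in negatives:
        # \b keeps "free" from matching inside "freelance".
        pattern = r"\b" + re.escape(negative) + r"\b"
        if re.search(pattern, normalized):
            hits.append(negative)
    return hits


def split_search_terms(terms):
    """Partition search terms into (keep, block) lists."""
    keep, block = [], []
    for term in terms:
        (block if matched_negatives(term) else keep).append(term)
    return keep, block
```

Running `split_search_terms` over a real export gives a quick estimate of how much spend is going to queries the evergreen list would exclude.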

The PMax Retargeting Bias and the Attribution Illusion

Performance Max now drives 45% of Google Ads conversions. It also has a structural tendency toward a problem that most operators never diagnose.

The algorithm seeks the path of least resistance to a conversion. The easiest conversions to find are users who were already going to convert: existing customers, recent site visitors, and branded searchers. These users have high purchase intent independent of your advertising. PMax finds them, serves them an ad, and claims credit for the conversion.

Your ROAS looks strong. Your cost per conversion looks efficient. Your actual new customer acquisition is flat or declining. This is the Attribution Illusion. The algorithm is finding the conversions that were already coming. It is not generating incremental growth.
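A quick way to size the gap is to recompute ROAS after setting aside conversions from existing customers and branded searchers. The sketch below assumes you can flag each conversion that way from your own CRM and search term data; the `Conversion` fields are hypothetical, and excluding these conversions is a rough proxy for incrementality, not a substitute for a holdout experiment.

```python
from dataclasses import dataclass


@dataclass
class Conversion:
    value: float             # revenue attributed to the conversion
    existing_customer: bool  # matched against your CRM customer list
    branded_query: bool      # the search term contained your brand name


def roas(revenue: float, spend: float) -> float:
    if spend <= 0:
        raise ValueError("spend must be positive")
    return revenue / spend


def attribution_gap(conversions, spend: float) -> dict:
    """Compare dashboard-style ROAS with ROAS from likely-new customers only."""
    reported = sum(c.value for c in conversions)
    likely_new = sum(
        c.value
        for c in conversions
        if not (c.existing_customer or c.branded_query)
    )
    return {
        "reported_roas": roas(reported, spend),
        "new_customer_roas": roas(likely_new, spend),
    }
```

If the two numbers are far apart, most of the reported return is coming from people who were already on their way to you.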

Three technical interventions address this directly:

Brand Exclusions at Campaign Level

Apply brand term exclusions within PMax to force branded queries out to a dedicated branded search campaign. This prevents PMax from taking credit for conversions it did not influence.

Customer Match Lists as Seed Profiles

Upload your existing customer list from CRM as a Customer Match audience within PMax. This serves two purposes. First, you can explicitly exclude existing customers from acquisition campaigns so the algorithm stops optimizing toward people who have already bought. Second, when used as a seed audience with expansion enabled, it gives the algorithm a starting profile to find genuinely new users who resemble your best customers. The quality of your seed list determines the quality of the lookalike expansion.

Asset Rotation and Maintenance

PMax surfaces asset combinations dynamically and evaluates their performance continuously. Any asset rated Low in the Asset Details report for more than three weeks is actively dragging performance. The algorithm deprioritizes it, which reduces your effective reach and raises your effective CPM on the assets that are working. Replace Low-rated assets with new creative hypotheses every three weeks. This is not optional maintenance. It is the mechanism through which PMax learns what resonates.

High ROAS is easy to fake. Incremental growth is hard to find. The gap between the two is where most accounts are living.

Funnel Analytics: From the What to the Why

Standard web analytics tells you what happened. Conversion rates dropped. A funnel stage has high abandonment. Traffic from a specific campaign has low engagement. This is useful for identifying that a problem exists. It does not tell you why the problem exists or what to fix.

Funnel and experience analytics close that gap. Quantitative data shows you where users are leaving. Qualitative friction signals show you why. Rage clicks indicate a UI element users expect to be interactive but is not. Dead clicks indicate users are clicking on non-linked elements, suggesting they expect navigation that does not exist. Session recordings show you the exact path a user took before abandoning a form.

| | Standard Web Analytics | Funnel and Experience Analytics |
|---|---|---|
| Focus | Traffic sources and breadth | User struggle points and depth |
| Primary Metrics | Conversion rates, sessions, bounce rate | Friction signals, rage clicks, dead clicks |
| Operational Goal | Understanding what happened | Understanding why it happened |
| Outcome | Identifies problems | Diagnoses causes |
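Experience analytics tools detect rage clicks for you, but the idea is simple enough to sketch from a raw click log. The thresholds here (three clicks on the same element within one second) and the `find_rage_clicks` name are assumptions for illustration; vendors define the signal in their own ways.

```python
from collections import defaultdict


def find_rage_clicks(clicks, min_clicks=3, window_seconds=1.0):
    """Return element ids that received a rapid burst of clicks.

    clicks: iterable of (timestamp_in_seconds, element_id) pairs.
    """
    by_element = defaultdict(list)
    for timestamp, element in clicks:
        by_element[element].append(timestamp)

    flagged = set()
    for element, stamps in by_element.items():
        stamps.sort()
        start = 0
        for end in range(len(stamps)):
            # Shrink the window until it spans at most window_seconds.
            while stamps[end] - stamps[start] > window_seconds:
                start += 1
            if end - start + 1 >= min_clicks:
                flagged.add(element)
                break
    return flagged
```

An element that keeps showing up in this set on a landing page is a candidate for the "expected to be interactive but is not" problem described above.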

The practical framework for sophisticated operators is a three-stage funnel view where each stage has distinct success metrics:

Awareness (Top of Funnel): Educational intent queries, impression share, and brand search volume growth. Success here is not conversions. It is qualified traffic entering the consideration stage.

Consideration (Middle of Funnel): Engagement depth, micro-conversions (content downloads, demo requests, free tool usage), and time to next touchpoint. The question at this stage is whether users are moving forward or stalling.

Decision (Bottom of Funnel): Transaction quality, lead-to-close rate, and customer acquisition cost against lifetime value. This is where OCT data feeds back into your Google Ads signal architecture. Closed deals imported as high-value conversion events teach the algorithm exactly what a valuable customer looks like.
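The decision-stage metrics reduce to a few ratios. A minimal sketch, with hypothetical function names and no claim about how your CRM defines a lead or a customer:

```python
def lead_to_close_rate(leads: int, closed_deals: int) -> float:
    """Share of leads that became closed deals."""
    if leads <= 0:
        raise ValueError("leads must be positive")
    return closed_deals / leads


def customer_acquisition_cost(ad_spend: float, new_customers: int) -> float:
    """Ad spend per newly acquired customer."""
    if new_customers <= 0:
        raise ValueError("new_customers must be positive")
    return ad_spend / new_customers


def ltv_to_cac(lifetime_value: float, cac: float) -> float:
    """How many times over a customer's lifetime value covers acquisition cost."""
    if cac <= 0:
        raise ValueError("cac must be positive")
    return lifetime_value / cac
```

For example, 200 leads producing 10 closed deals on $10,000 of spend gives a 5% lead-to-close rate and a $1,000 acquisition cost; with a $4,000 lifetime value, the ratio is 4.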

The AI-Readiness Audit Checklist

Run through this before drawing any conclusions about campaign performance. Most accounts that appear to be underperforming are actually operating on corrupted signal data, which means the optimization decisions being made are based on a distorted version of reality.

Repeat Rate Verification

Open the Conversions report in Google Ads and check the Repeat Rate column for your primary conversion actions. A repeat rate significantly above 1.0 means the same conversion is being counted multiple times for a single converting ad click. This inflates your conversion volume and teaches the algorithm that it is performing better than it actually is. The algorithm will raise bids toward traffic that appears to convert well but does not. Diagnose the cause (usually a misfired tag or a page that loads the conversion event multiple times) and fix it before making any bid strategy changes.
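If you export conversion counts, the same check can be scripted. This sketch assumes repeat rate is total conversions divided by the number of ad clicks that produced at least one conversion; the 1.2 alert threshold and both function names are hypothetical.

```python
def repeat_rate(total_conversions: float, converting_clicks: float) -> float:
    """Average number of conversions recorded per converting click."""
    if converting_clicks <= 0:
        raise ValueError("converting_clicks must be positive")
    return total_conversions / converting_clicks


def flag_repeat_rates(actions: dict, threshold: float = 1.2) -> dict:
    """Return {action_name: repeat_rate} for actions above the threshold.

    actions maps a conversion action name to a
    (total_conversions, converting_clicks) tuple.
    """
    flagged = {}
    for name, (total, clicks) in actions.items():
        rate = repeat_rate(total, clicks)
        if rate > threshold:
            flagged[name] = rate
    return flagged
```

This is most meaningful for lead-type actions where one conversion per click is the expected behavior.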

Asset Quality Rotation

In PMax campaigns, navigate to Asset Groups and open the Asset Details report. Filter for any asset rated Low that has been in that state for more than three weeks. Replace it. Do not pause and reactivate. Replace with genuinely new creative. The algorithm needs new hypotheses to test, not the same underperforming asset given another chance.
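The three-week rule is easy to automate if you keep your own log of when each asset first dropped to Low. The sketch below assumes such a log exists; the `low_since` field is hypothetical bookkeeping you maintain yourself, not something taken from the report.

```python
from datetime import date


def assets_to_replace(assets, today: date, max_low_days: int = 21) -> list[str]:
    """Names of assets rated Low for more than max_low_days.

    assets: iterable of dicts with 'name', 'rating', and 'low_since'
    (a date, or None if the asset is not currently rated Low).
    """
    flagged = []
    for asset in assets:
        if asset["rating"] != "Low" or asset["low_since"] is None:
            continue
        if (today - asset["low_since"]).days > max_low_days:
            flagged.append(asset["name"])
    return flagged
```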

Signal Integrity Check

Verify that Enhanced Conversions are hashing customer data correctly. The hashing algorithm must be SHA-256. The critical requirement that most implementations get wrong: for Gmail addresses specifically, you must remove all periods and plus-sign suffixes from the username portion of the email before hashing. The hash must be generated from the stripped username, not from the address as the user typed it. Google normalizes Gmail addresses this way internally. If you hash the unnormalized version, the match fails and you lose attribution on those conversions.
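The normalization described above can be sketched in a few lines. Treat this as an illustration rather than Google's reference implementation: trimming whitespace, lowercasing, and including googlemail.com are assumptions I have added, the plus-suffix step follows the description in this article, and the sample address in the usage note is made up. Check the current Enhanced Conversions documentation before relying on any of it.

```python
import hashlib

# Assumed set of domains that get Gmail-style normalization.
GMAIL_DOMAINS = {"gmail.com", "googlemail.com"}


def normalize_email(email: str) -> str:
    """Lowercase and trim; for Gmail, drop plus-suffixes and periods."""
    cleaned = email.strip().lower()
    local, sep, domain = cleaned.rpartition("@")
    if not sep or not local or not domain:
        raise ValueError(f"not an email address: {email!r}")
    if domain in GMAIL_DOMAINS:
        local = local.split("+", 1)[0]  # plus-suffix removal, per this article
        local = local.replace(".", "")  # period removal
    return f"{local}@{domain}"


def hash_email(email: str) -> str:
    """SHA-256 hex digest of the normalized address."""
    normalized = normalize_email(email)
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()
```

With this in place, a made-up address such as `Jane.Doe+news@gmail.com` and its stripped form `janedoe@gmail.com` produce the same hash, which is the property the match depends on.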

CRM Bridge Alignment

Verify that your Offline Conversion Tracking import is mapped to meaningful milestones, not just final closed-won status. Google supports a 90-day upload window for GCLID-based conversion imports. If your sales cycle is longer than 90 days, you cannot rely on closed-won as your only OCT signal. You need mid-funnel milestones: demo completed, proposal sent, contract review stage. These carry lower proxy values but maintain a consistent signal flow into the algorithm throughout your sales cycle.
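Before each import, it is worth filtering out milestones whose original click has already aged past the 90-day window. The sketch below does that and shapes the survivors into rows; the milestone values are hypothetical, and the column names and timestamp format reflect my recollection of Google's import template, so verify them against the current template.

```python
from datetime import datetime, timedelta, timezone

UPLOAD_WINDOW = timedelta(days=90)

# Hypothetical proxy values for mid-funnel milestones.
MILESTONE_VALUES = {
    "demo_completed": 150.0,
    "proposal_sent": 400.0,
    "contract_review": 1_000.0,
}


def within_upload_window(click_time: datetime, upload_time: datetime) -> bool:
    """True if the click is no more than 90 days older than the upload."""
    return timedelta(0) <= upload_time - click_time <= UPLOAD_WINDOW


def build_import_rows(events, upload_time: datetime, currency: str = "USD"):
    """Turn CRM milestone events into import rows, skipping expired clicks."""
    rows = []
    for event in events:
        if not within_upload_window(event["click_time"], upload_time):
            continue  # the click is too old for this conversion to be accepted
        rows.append({
            "Google Click ID": event["gclid"],
            "Conversion Name": event["milestone"],
            "Conversion Time": event["milestone_time"].strftime("%Y-%m-%d %H:%M:%S%z"),
            "Conversion Value": MILESTONE_VALUES[event["milestone"]],
            "Conversion Currency": currency,
        })
    return rows
```

Counting how many events get skipped each week is a cheap indicator of whether your milestones sit early enough in the sales cycle.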

Budget Headroom

Check that your daily budgets are set to at least 10 to 20 times your Target CPA. This is not a suggestion. A daily budget that caps out before the algorithm has explored enough auction variance will cause erratic bidding behavior and prevent the smart bidding models from stabilizing. If your Target CPA is $100 and your daily budget is $150, the algorithm cannot function. It will hit the cap early, stop bidding, restart the next day, and never accumulate enough data to learn effectively.
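The headroom check is a single ratio. A minimal sketch, using the lower bound of the 10 to 20 times range as the default; the function name is hypothetical:

```python
def budget_headroom(daily_budget: float, target_cpa: float,
                    min_multiple: float = 10.0) -> tuple[float, bool]:
    """Return (budget-to-tCPA ratio, whether it meets the minimum multiple)."""
    if target_cpa <= 0:
        raise ValueError("target_cpa must be positive")
    ratio = daily_budget / target_cpa
    return ratio, ratio >= min_multiple
```

The $150 budget against a $100 Target CPA from the example above comes out at a ratio of 1.5, far short of the minimum.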

Algorithm Observability: What Sophisticated Operators Actually Do

The operators who consistently outperform in Google Ads have moved past campaign management. They are practicing what I call Algorithm Observability: the systematic monitoring of how your data signals are influencing machine behavior in real time.

You are no longer managing bids. You are an architect of signals. The bids are a consequence of the signals you provide. If you want different bids, you need different signals. If you want the algorithm to find different customers, you need to show it different examples of what a good customer looks like.

This means your highest-leverage work is not in the Google Ads interface. It is in your CRM configuration, your conversion tracking setup, your customer data quality, and your feedback loop between closed revenue and campaign optimization. The interface is just the place where you verify that the signal architecture you built is producing the outputs you intended.

Most accounts discover, when they run a proper signal audit, that 15 to 30% of their spend is allocated to traffic they never intended to buy. Not because of poor targeting choices, but because the conversion signals they set up years ago are still running on configurations that made sense then and do not reflect how their business works now.

Frequently Asked Questions

Why is my Google Ads ROAS high but my actual revenue is flat?

This is almost always the Attribution Illusion. Performance Max and broad match campaigns preferentially find existing customers and branded searchers who were already going to convert. They generate conversions that look real in the dashboard but represent traffic that would have converted anyway. The algorithm claims credit and reports strong ROAS while your new customer acquisition stays flat. Fix it by adding brand exclusions to PMax, uploading existing customer lists for suppression, and implementing Offline Conversion Tracking so the algorithm sees downstream revenue quality, not just top-of-funnel form fills.

What is Value-Based Bidding and when should I use it?

Value-Based Bidding tells the algorithm that different conversions have different worth. Instead of optimizing for conversion volume, it optimizes for conversion value. You assign proxy values to different conversion events based on their downstream revenue probability. An MQL is worth $50. A sales-qualified lead is worth $500. A closed deal is worth $5,000. The algorithm bids more aggressively for users likely to become high-value conversions. Use it when you have reliable CRM data and can quantify the revenue value of different lead stages.

What is Offline Conversion Tracking and why does it matter?

Offline Conversion Tracking imports downstream CRM data back into Google Ads after the initial click. When a lead that clicked your ad closes as a customer six weeks later, you import that closed deal back into Google Ads with the original GCLID. The algorithm learns which traffic profiles actually produce revenue, not just which ones submit forms. This is the most powerful signal upgrade available in Google Ads and most accounts are not using it.

How do negative keywords improve conversion rates?

Negative keywords define the boundaries of where the algorithm can explore. Without them, broad match and Performance Max will systematically expand into tangentially related queries that generate clicks but not conversions. Accounts with active negative keyword management show conversion rates of roughly 13% versus 4.6% for accounts without. The improvement is not from better ads. It is from eliminating the traffic that was never going to convert and concentrating spend on the traffic that will.

What causes double-counting in Google Ads conversion tracking?

Double-counting happens when a conversion tag fires multiple times for a single user action. Common causes include the conversion tag placed on a page that reloads during the checkout process, a tag manager trigger configured to fire on all page loads instead of only the confirmation page, or a thank-you page that is accessible without completing a form. Check your Repeat Rate in the Conversions report. Anything significantly above 1.0 indicates a double-counting issue that is corrupting your optimization signals.

How often should I update Performance Max assets?

Replace any asset rated Low in the Asset Details report after three weeks in that status. Do not wait longer. The algorithm is already deprioritizing that asset, which reduces effective reach and raises effective CPM on the assets performing well. Replacing it gives the algorithm a new hypothesis to test and maintains the learning velocity that PMax requires to perform. Think of asset rotation as the creative equivalent of bid experimentation. You are testing hypotheses, not running a static campaign.

Sources

- WordStream — Most Google Ads Accounts Waste $1,127 a Month, Study of 15K Accounts Finds via PPC Land

- Google Ads Help — Enhanced Conversions for Leads: Setup and Hashing Requirements

- Google Ads Help — Offline Conversion Tracking with GCLID

- Google Ads Help — About Audience Signals for Performance Max Campaigns

- Spider AF — Machine Learning in Google Ads: How It Really Works

- Ajala Digital — Understanding the Effectiveness of Broad Match in Google Ads

- Ratan Jha Digital — Your Google Ads AI Is Optimizing on Garbage Data. Here's How to Fix It